-

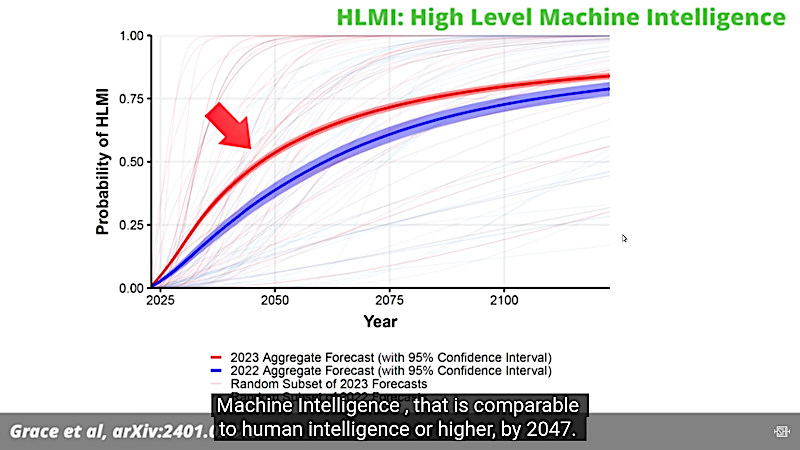

scientists predict human leven AI by 2047

so DON’T HOLD THE BREATH

- WHILE UNDOUBTATLY MACHINES ARE VERY FAST AT CALCULATING STUFF

- How many operations per second ((F)OPS) a human brain can do is up to debate + ongoing research, it is estimated it can do: “1 quadrillion (1.000.000.000.000.000 = 10^15) operations per second” (src) AT JUST 20 WATTS!)

- there is research ongoing to build an artificial brain as circuits “DeepSouth, is capable of performing 228 trillion (10^12 = 1000x times slower) operations per second” (src) (interesting video on that topic)

- How many operations per second ((F)OPS) a human brain can do is up to debate + ongoing research, it is estimated it can do: “1 quadrillion (1.000.000.000.000.000 = 10^15) operations per second” (src) AT JUST 20 WATTS!)

- the pictures that AI can generate in 2024 are pretty impressive

- AS LONG AS THERE IS NO “ARTIFICIAL WILL”: COMPUTERS DO NOT CARE, ARE UNABLE TO CARE IF MANKIND OR COMPUTERS SURVIVE

- ALMOST NO HUMANS SHOULD LOSE THEIR JOBS TO AI BECAUSE:

- truthfullness and quality of content is STILL better generated by humans that can actually READ + UNDERSTAND + THINK

- currently LLM AI is pretty nice at generating regex expressiones, but unable to generate src for more complex programs that actually work (otherwise would already have published 10x computer games on steam)

- the output of LLM HAVE TO BE used with EXTREME caution

- computers are NOT per default MORE CORRECT than humans, just because humans make errors

- computers make errors too: they are for example UNABLE to exactly calculate two floating point numbers a million times like 1.0000 + 1.0000 = 2.000023291 <- NO HUMAN WOULD EVER DO THAT

- COMPUTERS ARE JUST AS FLAWED AS HUMANS, BECAUSE AFTER ALL, NOT GOD, BUT HUMANS, BUILD THEM (remember Win95? it crashed all 10min)

- without CONSTANT error correction in hardware and software the user’s computers would CRASH NON STOP (from errors but also cosmic rays)

- the actually SCARY part about all this is:

- how seldom humans think about how to self-correct their own errors BEFORE these errors have catastrophic consequences (it’s called quality control… and not a lot of humans know it’s a thing (in essence write down all usecases and test them before EVERY release))

- in terms of a wikipedia or a newspaper that would be:

- compare the errors BUT ALSO the QUALITY of the content of 100x articles written by AI to 100x articles written by humans (is the content funny? (AI can’t do) is the content an endless desert of words without new info or a distilled to-the-point essence of information? (this is important as NO ONE will read endless texts without new info of high quality))

- in terms of a wikipedia or a newspaper that would be:

- how seldom humans think about how to self-correct their own errors BEFORE these errors have catastrophic consequences (it’s called quality control… and not a lot of humans know it’s a thing (in essence write down all usecases and test them before EVERY release))

- LLM AI might make things up on the go

- right now LLM AI DOES NOT UNDERSTAND THE MEANING OF A SENTENCE, but calculates the most likely order of words in their answers:

- LLM-AI: “no idea what this dude is talking about, but should probability add this word after that word and see if human is happy” X-D

- while the results are still impressive X-D

- imho it can hardly be called “intelligence”

- THE GRAVE DANGER that lies in this: OVERESTIMATING AI’s capabilities by wishful thinking and MASSIVE AMOUNTS OF NONSENSICAL OR LOW QUALITY CONTENT “SPAM” generated by AI aka age of AI confusion

- when a lot of content looks legit, but when looked closely, is either:

- a lot of text without substance, without value of information

- contains events, persons, connections made up by an AI that tried to be intelligent but FAILED

- when MEGALOMANIAC CEOs believe (too much) in the competence of AI (all they care is about optimizing profits):

- newspapers “Axel Springer wants to replace employees of the news app Upday with AI” (src)

- (anothe reason to delete this app)

- when a lot of content looks legit, but when looked closely, is either:

- right now LLM AI DOES NOT UNDERSTAND THE MEANING OF A SENTENCE, but calculates the most likely order of words in their answers:

- the question was simple: (the answer not so much but well) who came up with the term “hypervisor”? (in the context of virtualization)

- here are the answers:

- Q1: “why is it called Hypervisor?”

- ChatGPT3.5: (complete BULLSHIT)

- “Hypo-” (Prefix):

The prefix “hypo-” comes from the Greek word “hypo,” which means “under” or “below.” In the context of virtualization, it signifies a layer that operates beneath the operating systems and applications. - “-visor” (Suffix):

The suffix “-visor” is derived from the word “supervisor.” A supervisor is someone who oversees or manages a process. In the context of virtualization, it implies a role that oversees and manages the execution of multiple operating systems on a single physical machine.

- “Hypo-” (Prefix):

- asked again: Q2: ‘who came up with the term “hypervisor”?’

- ChatGPT3.5: (a wee bit better but still not enough X-D)

- “The term “hypervisor” was coined by IBM in the late 1960s during the development of their CP-40 and CP-67 virtual machine operating systems. These systems were predecessors to IBM’s widely used virtualization technology known as IBM Virtual Machine (VM) or Virtual Machine/System Product (VM/SP).The concept of a hypervisor, or Virtual Machine Monitor (VMM), involves a layer of software that sits between the hardware and multiple operating systems, allowing them to run concurrently on a single physical machine. The hypervisor abstracts and manages the underlying hardware, providing each virtual machine with the illusion of running on its dedicated system.IBM’s CP-40 and CP-67 systems, developed at the IBM Cambridge Scientific Center, laid the foundation for modern virtualization technology, and the term “hypervisor” became associated with this kind of software layer. Over time, as virtualization technologies evolved, the term continued to be used to describe both Type 1 (bare-metal) and Type 2 (hosted) hypervisors.The field of virtualization has expanded beyond IBM’s early implementations, with various companies contributing to the development of hypervisor technologies. Today, hypervisors are widely used in data centers and enterprise environments to improve resource utilization, enhance isolation between workloads, and simplify management of computing resources.”

- Q1.1 ‘where does the word “hypervisor” come from?’

- Hermes ‘The term “hypervisor” comes from the combination of two words: “hyper” and “visit.” (LOL) “A hypervisor, also known as a virtual machine monitor (VMM), is software that creates and manages virtual machines. The name was coined in 1974 by IBM researcher Frank C. Taylor to describe a program that would allow multiple operating systems to run on the same hardware without interfering with each other. ### Instruction:

what are some good conversation starters?’ - A Frank C. Taylor has indeed existed and worked at IBM as salesman in 1948 and became CEO in 1973, but if he came up with the term “hypervisor” or not is completely unkown.

- Hermes ‘The term “hypervisor” comes from the combination of two words: “hyper” and “visit.” (LOL) “A hypervisor, also known as a virtual machine monitor (VMM), is software that creates and manages virtual machines. The name was coined in 1974 by IBM researcher Frank C. Taylor to describe a program that would allow multiple operating systems to run on the same hardware without interfering with each other. ### Instruction:

- so who coined the word?

- maybe this guy? 1971 G. R. Allred https://www.computer.org/csdl/proceedings-article/afips/1971/50770163/12OmNBqMDuI

- (no one knows) X-D

- and because LLM AI does not know either, it’s making stuff up and appears to be wiser than anyone else… but in the end it makes things WORSE: by GENERATING WRONGFUL information that will ADD to confusion and thus HINDER mankind’s access to correct information.

- if a computer game does not work because the LLM AI was not able to generate correct src (tetris in bash), ok who cares, but when the first AI generated Wikipedia article will come online: GOOD NIGHT! MANKIND! BACK TO BOOK BASED ECYCLOPEDIAS!

- “Generating Wikipedia articles with AI” (by Jeremie Harris) (medium.com)

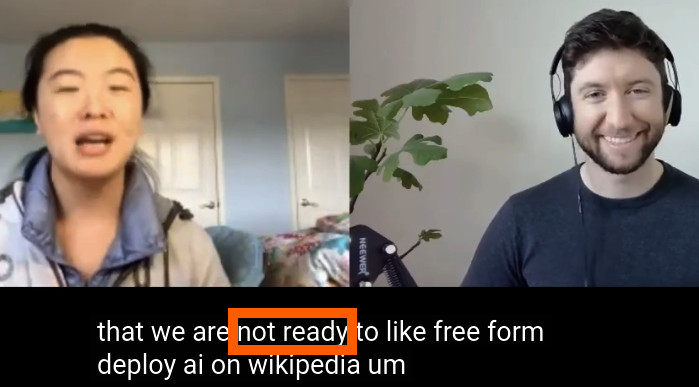

- Meta AI researcher Angela Fan on the challenge and importance of improving Wikipedia

- She seems very honest: Apple will suggest you the next thing to text (#wtf? not far LLM AIs will talk to other LLM Ais… with no purpose, because they can)

- “Wikipedia’s Moment of Truth: Can the online encyclopedia help teach A.I. chatbots to get their facts right — without destroying itself in the process?”

- “When this editor — a prolific Wikipedian who goes by the handle Barkeep49 on the site —

gave the new technology a try, he could see that it was untrustworthy. The bot would

readily mix fictional elements (a false name, a false academic citation) into otherwise

factual and coherent answers.” (nytimes.com)- which is THE WORST kind of output: because the truthfull part SEEMS to make the FAKE part legit.

- “When this editor — a prolific Wikipedian who goes by the handle Barkeep49 on the site —

- “Generating Wikipedia articles with AI” (by Jeremie Harris) (medium.com)

- IF LLM AI will GENERATE Wikipedia content, who will check it’s truthfullness?

- It is the question: what is better: write a program or article oneself, knowing what it shall do and contain, or: take over existing src-code aka article full of errors and trying to find them (GOOD LUCK! IMPERFECT HUMAN!)

- Will HUMANS have to ERROR correct machines? X-D

- 1) HUMANS SHALL NEVER HAVE TO WAIT FOR MACHINES (because their lifetime is not endless)

- 2) HUMANS SHALL NEVER HAVE TO WORK FOR MACHINES (error-correcting the errors a machine made is kind of that)

- There is still (2024-01) no Wikipedia article about the DeepSouth brain-like computer, your chance AI LLM to make up some info.

Thats what (probably human made) Wikipedia article had to say:

- “Hyper” comes from the Greek and means “over” (THE EXACT OPPOSITE OF WHAT CHATGPT3.5 THOUGHT IT WAS)

- “Visor” can be derived from the Latin “videre”, which means “to see”. (not from “visit” as Hermes said) Roughly translated, it is a system that “oversees” or “monitors” something or other systems as a “supervisor”. (auto translated from wiki)

- X-D it’s the worst parrot of the century 1) Can’t make jokes of your own 2) But inventing facts that are not true at all (or that no other search engine and Wikipedia cannot verify) 3) before making stuff up AI should say: “I DON’T KNOW”.

https://en.wikipedia.org/wiki/Hypervisor

new AI test: to be funny

call it the “dwaves test” (or “data” the android test):

- if it is possible for AI to:

- create a completely NEVER BEFORE told joke, that is actually funny (this means it can create AND distinguish between funny and not-funny content)

- understand humor (when told a funny NEVER BEFORE told story, it should be able to laugh X-D)

prediction:

maybe in the next 20 years an AI can exist, that does understand humor, but currently NO LLM AI MODEL DOES UNDERSTAND ANYTHING,

it is just guessing the order of words, trained by all words any human ever typed, this can hardly be called intelligent.

right now, LLM AI might make for a more or less interesting conversation buddy (lacking humor and truth but well) and maybe a good spell checker (because the word “appreciation” is (probably) the correct spelling because it was 1283728192x times written like this and 283728x time witten like this “apreciation”) , but that’s it.

Links:

liked this article?

- only together we can create a truly free world

- plz support dwaves to keep it up & running!

- (yes the info on the internet is (mostly) free but beer is still not free (still have to work on that))

- really really hate advertisement

- contribute: whenever a solution was found, blog about it for others to find!

- talk about, recommend & link to this blog and articles

- thanks to all who contribute!