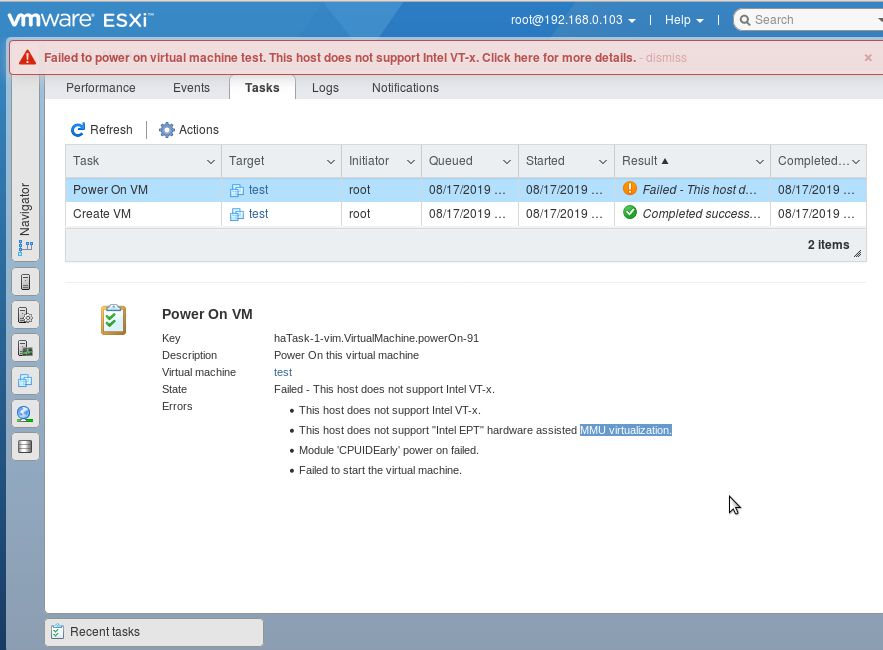

running esxi inside a virtualbox vm is just a test, to play with it. unfortunately one will not be able to start vms on it, because of the lack of nested virtualization (at least in the virtualbox setup used here). (could/should work nested inside qemu-kvm?)

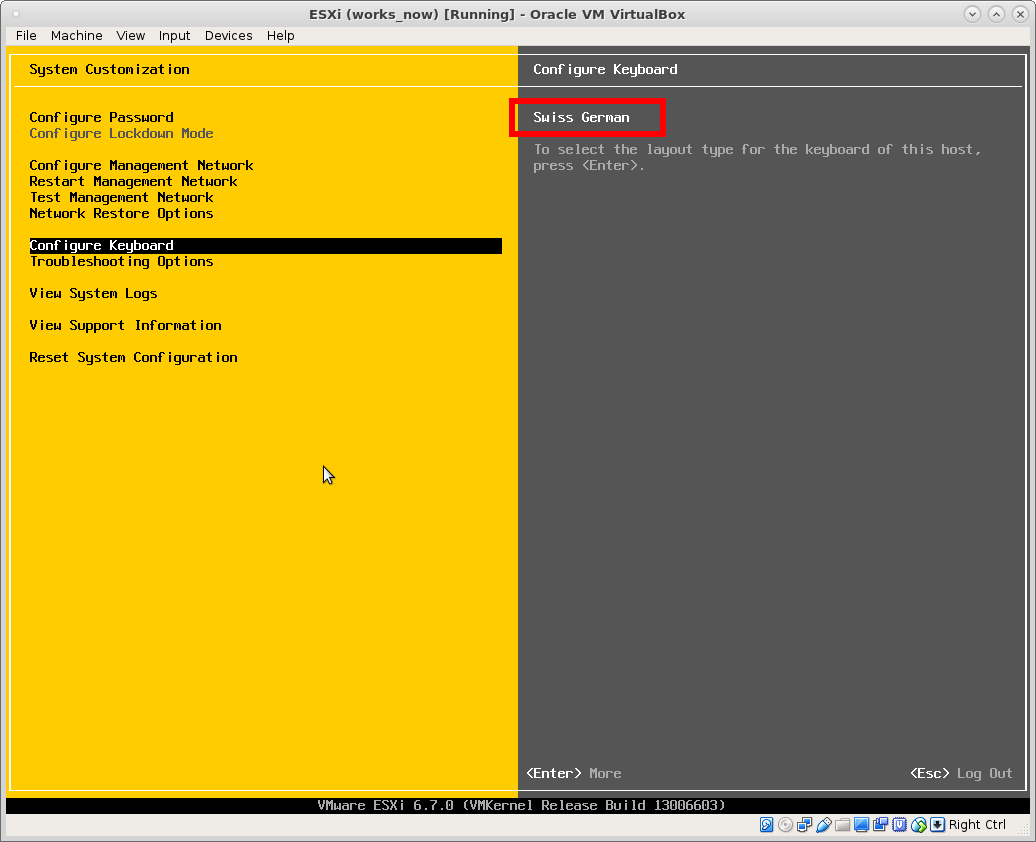

the problem: one should have left the keyboard layout as “Default US” during setup.

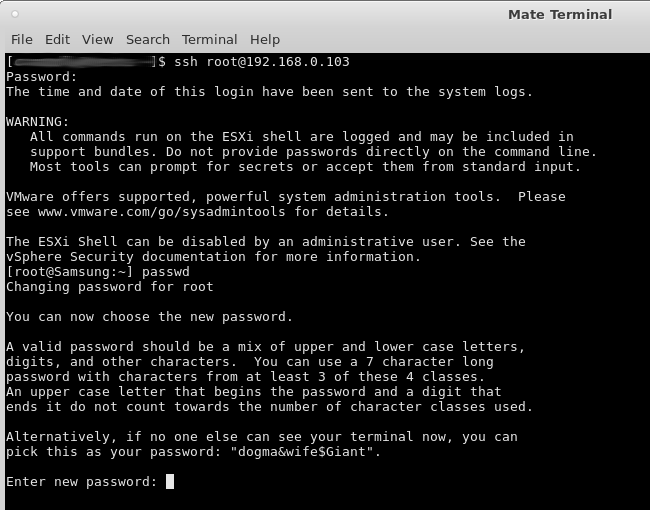

now all passwords one enters at the esxi console probably do not come out the same as when one types them in an ssh terminal or web browser.

solution: either reinstall the host with the “Default US” keyboard layout, or set a password that is typed the same way on the US and the German keyboard layout, for example:

dfghj123.

so: keep changing the password until one finds one that is the same in both keyboard layouts – happy hacking! 🙂
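instead of trial and error, one could check a candidate password up front. a minimal sketch, assuming the standard US QWERTY and German QWERTZ layouts: y and z swap places and most punctuation moves, so only a small, deliberately conservative set of characters is treated as safe. the function names are made up for this example.

```python
import string

# Assumption: standard US QWERTY vs. German QWERTZ layouts.
# y and z swap places and most punctuation sits on different keys,
# so only a conservative set of characters is treated as "safe".
SAFE_LETTERS = set(string.ascii_letters) - set("yzYZ")
SAFE_CHARS = SAFE_LETTERS | set(string.digits) | set(".,!$%")


def layout_mismatches(password):
    """Return the characters that are not typed identically on both layouts."""
    return [ch for ch in password if ch not in SAFE_CHARS]


def is_layout_safe(password):
    """True if every character sits on the same key in both layouts."""
    return not layout_mismatches(password)


print(is_layout_safe("dfghj123."))      # True
print(layout_mismatches("Crazy#Pass"))  # ['z', 'y', '#']
```

the example password from above passes, while anything containing y, z or most special characters gets flagged.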

no, it’s not one’s fault – it’s just a keyboard layout problem.

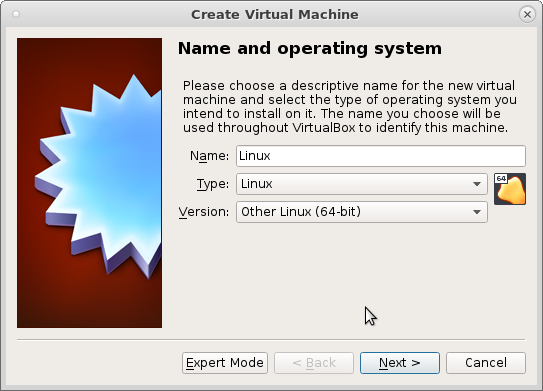

to make it run, create a new virtualbox vm of type “Other Linux”

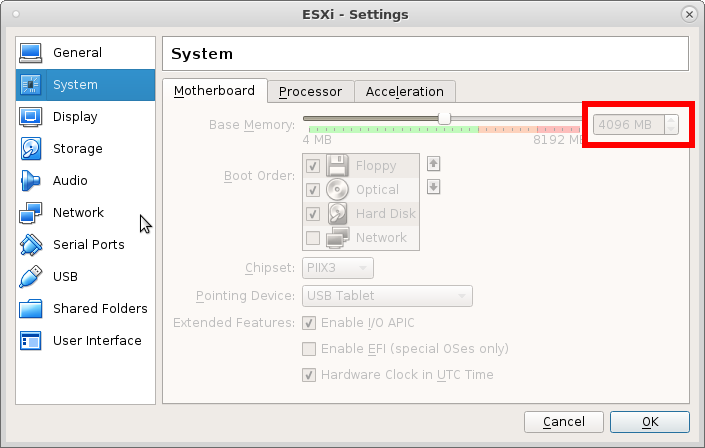

give it at least 4GBytes of RAM or it will complain

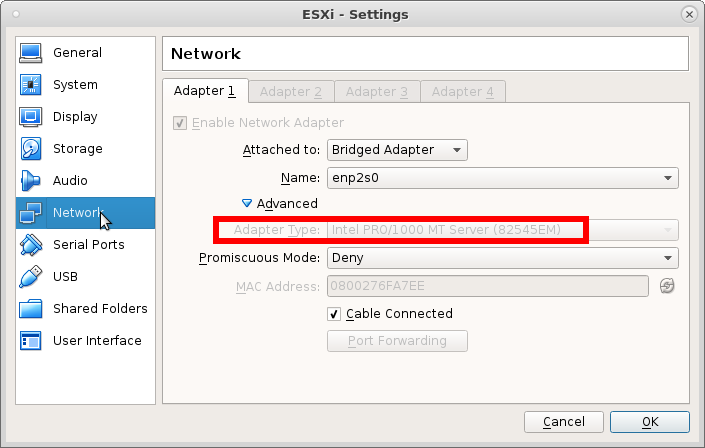

give it this NIC, or it will give one the “pinky” (the “pink screen of death”), saying it can not decompress sb.v00

also: 10GByte is enough for the first harddisk; add a second harddisk (2TB) as storage for the vms this host will host.
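the same vm could probably also be prepared from the command line. a rough sketch that only prints the VBoxManage calls for the values above (4GByte RAM, 10GByte + 2TB disks). assumptions: the vm name and disk directory are made up, `Linux_64` is taken to be the id behind “Other Linux (64-bit)”, and the NIC type is left out because it is only visible in the screenshot. attaching the disks and picking the NIC still has to be done afterwards.

```python
import shlex


def vbox_commands(name, disk_dir, ram_mb=4096, sys_disk_gb=10, data_disk_tb=2):
    """Build VBoxManage command lines for the vm described above."""
    sys_mb = sys_disk_gb * 1024           # createmedium expects the size in MB
    data_mb = data_disk_tb * 1024 * 1024  # 2 TB -> 2097152 MB
    return [
        # Assumption: "Linux_64" is the ostype id of "Other Linux (64-bit)".
        ["VBoxManage", "createvm", "--name", name,
         "--ostype", "Linux_64", "--register"],
        ["VBoxManage", "modifyvm", name, "--memory", str(ram_mb)],
        ["VBoxManage", "createmedium", "disk",
         "--filename", f"{disk_dir}/{name}-system.vdi", "--size", str(sys_mb)],
        ["VBoxManage", "createmedium", "disk",
         "--filename", f"{disk_dir}/{name}-datastore.vdi", "--size", str(data_mb)],
    ]


# Hypothetical vm name and directory, only for illustration.
for cmd in vbox_commands("esxi-test", "/tmp/esxi-test"):
    print(shlex.join(cmd))
```

printing instead of executing keeps it harmless: one can read the commands first and paste them into a terminal once they look right.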

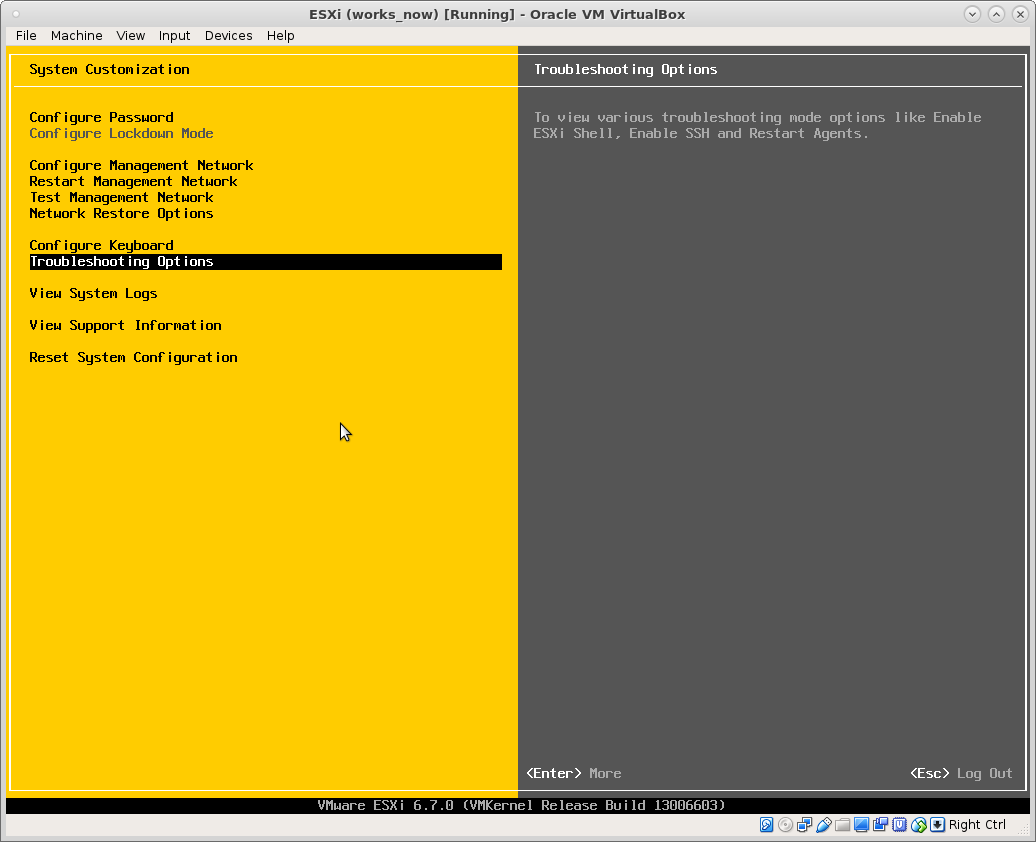

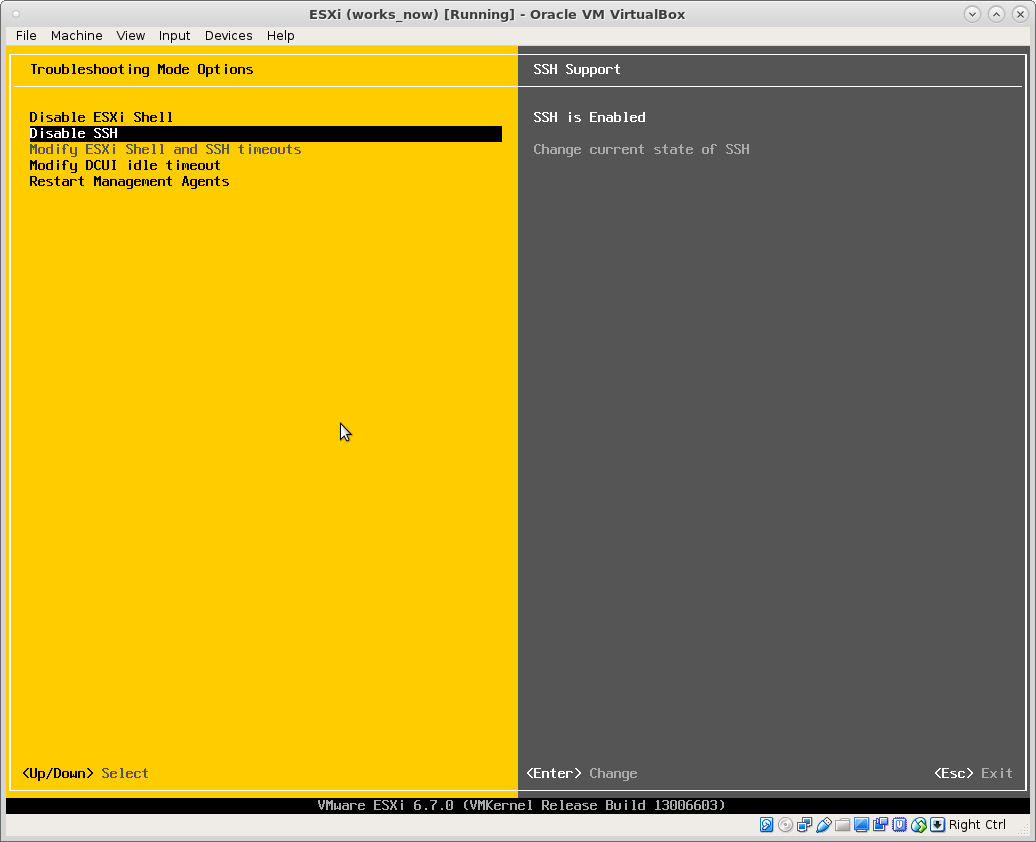

enable ssh login:

once one has managed to log in via ssh, one should immediately use passwd to change the password to the one originally wanted, to avoid future confusion.

esxi is very creative in suggesting passwords. (which this way will never work in any other keyboard layout X-D)

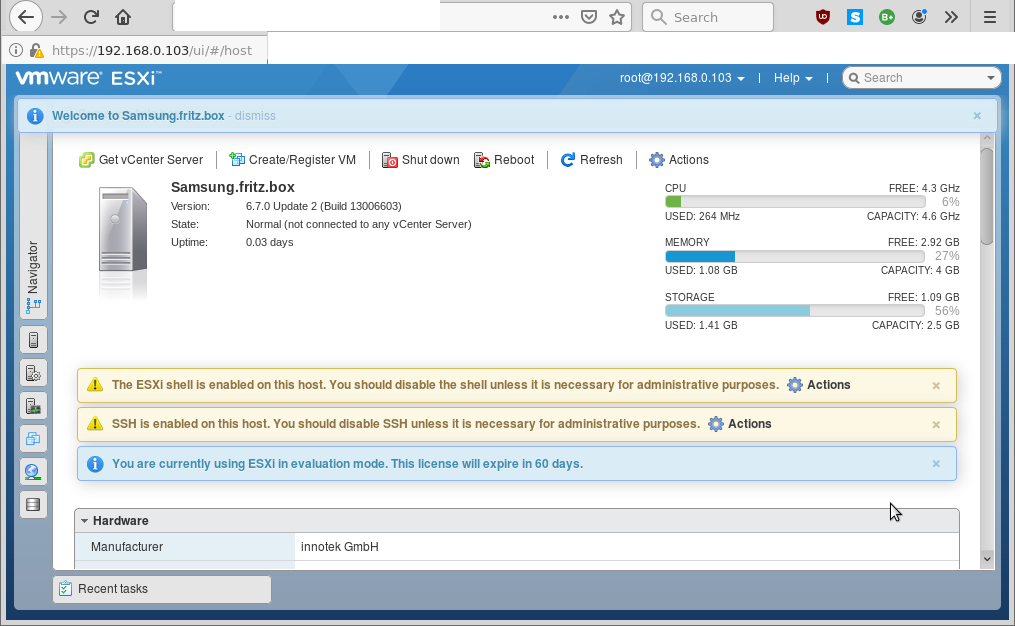

now the web gui login and the dcui (console) login should not be a problem either: (accept the self-signed SSL/https certificate)

how the funny domain came together one can only guess (stored by the DSL router and inherited from a mobile phone)

there used to be a windows based desktop gui to manage vms, named “vSphere Client” https://my.vmware.com/web/vmware/info?slug=datacenter_cloud_infrastructure/vmware_vsphere/6_0

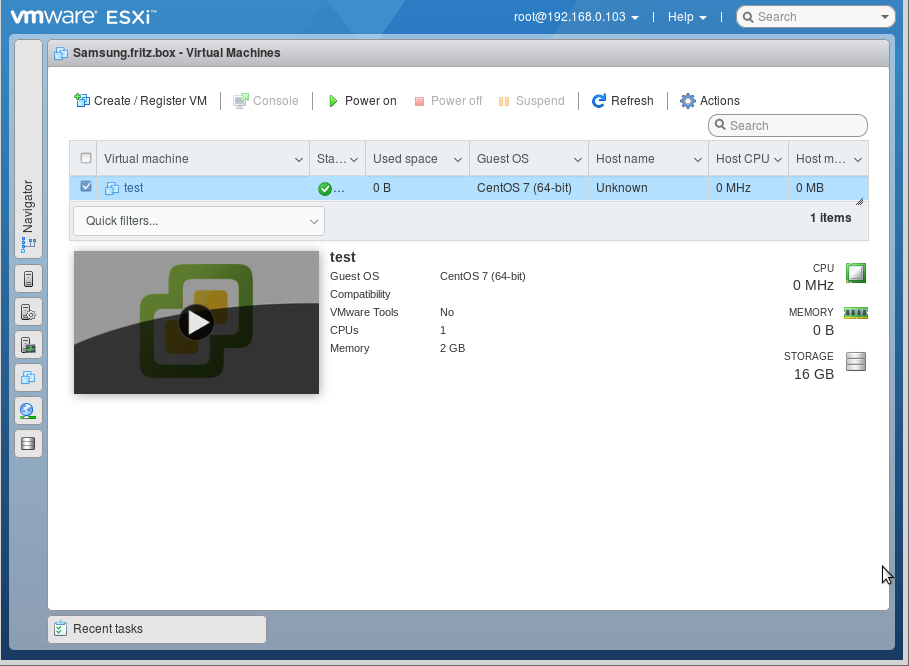

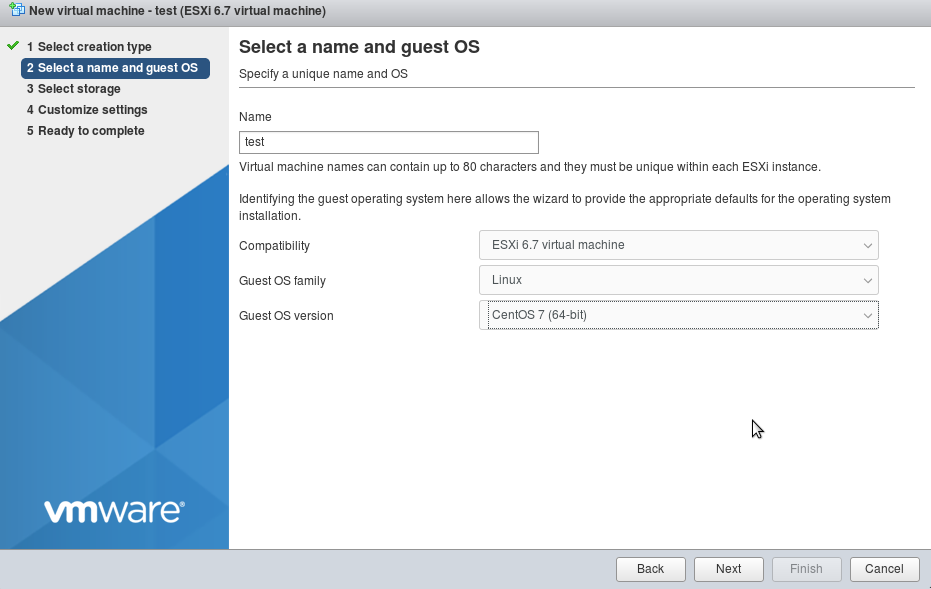

but it seems vmware has gone more web based. one can now create a vm entirely via the web gui:

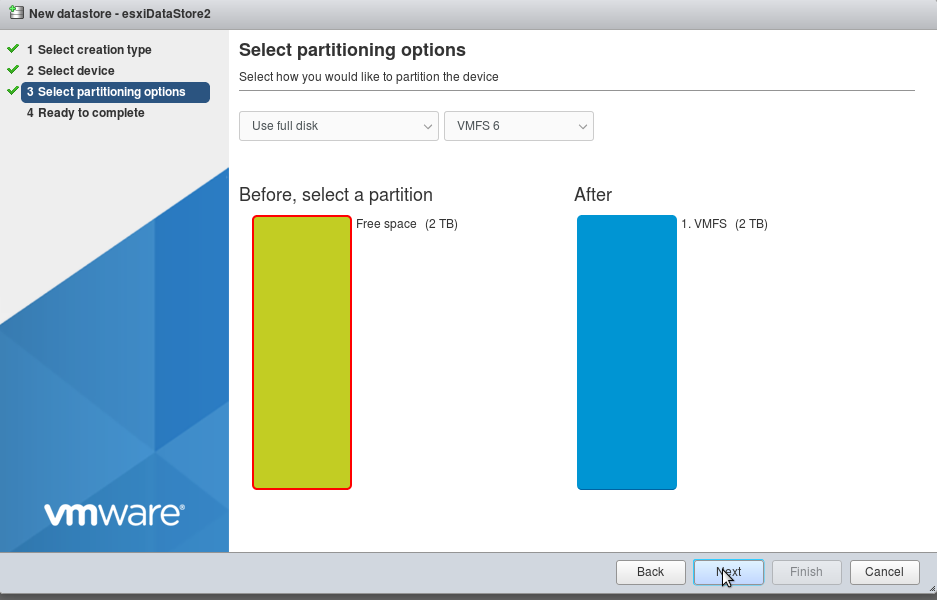

create a new datastore on the second harddisk (the one on the first harddisk can be deleted)

about IOMMU:

“So long story short, the only way an IOMMU will help you is if you start assigning HW resources directly to the VM. Just having it doesn’t make things faster.

It would help to know exactly what Motherboard/CPU is advertising this feature. IOMMU is a system specific IO mapping mechanism and can be used with most devices.

IOMMU sounds like a generic name for Intel VT-d and AMD IOV. In which case I don’t think you can multiplex devices, it’s a lot like PCI passthrough before all these fancy virtualization instructions existed :). SR-IOV is different, the peripheral itself must carry the support. The HW knows it’s being virtualized and can delegate a HW slice of itself to the VM. Many VMs can talk to an SR-IOV device concurrently with very low overhead.

The only thing faster than SR-IOV is PCI passthrough though in that case only one VM can make use of that device, not even the host operating system can use it. PCI passthrough would be useful for say a VM that runs an intense database that would benefit from being attached to a FiberChannel SAN.

Getting closer to the HW does have limitations however, it makes your VMs less portable for deployments that require live migration for example. This applies to both SR-IOV and PCI passthrough.

Default virtualized Linux deployments usually use VirtIO which is pretty fast to begin with.”

(src)
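to see whether a Linux host actually exposes an IOMMU, one can look at the IOMMU groups the kernel publishes in sysfs. a small sketch, assuming a Linux host with the usual `/sys/kernel/iommu_groups` directory; the function name is made up, and an empty result only means that no groups were found (IOMMU missing, or disabled in BIOS/kernel).

```python
from pathlib import Path


def iommu_groups(root="/sys/kernel/iommu_groups"):
    """Return {group number: [PCI addresses]}; empty if no IOMMU groups exist."""
    base = Path(root)
    if not base.is_dir():
        return {}
    groups = {}
    for group in base.iterdir():
        devices = group / "devices"
        # Each group is a numbered directory with a "devices" subdirectory.
        if group.name.isdigit() and devices.is_dir():
            groups[int(group.name)] = sorted(d.name for d in devices.iterdir())
    return groups


if __name__ == "__main__":
    found = iommu_groups()
    if not found:
        print("no IOMMU groups found")
    for number in sorted(found):
        print(number, found[number])
```

devices that share a group can usually only be passed through to a vm together, which is why the grouping matters for PCI passthrough.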

while vmware is surely a rock solid solution, vmware has a confusing number of tools, to say the least.

- Datacenter & Cloud Infrastructure
  - VMware vCloud Suite Platinum
  - VMware vCloud Suite
  - VMware vSphere with Operations Management
  - VMware vSphere
  - VMware vSAN
  - VMware vSphere Data Protection Advanced
  - VMware vSphere Storage Appliance
  - VMware vSphere Hypervisor (ESXi)
  - VMware vCloud Director
  - VMware vCloud NFV
  - VMware vCloud NFV OpenStack Edition
  - VMware Validated Design for Software-Defined Data Center
  - VMware vCloud Availability for vCloud Director
  - VMware Cloud Foundation
  - VMware vSphere Integrated Containers
  - VMware vCloud Usage Meter
  - VMware vCloud Availability
  - VMware vCloud Availability for Cloud-to-Cloud DR
  - VMware Cloud Provider Pod
  - VMware Skyline Collector
  - VMware vCloud Director Object Storage Extension
- Infrastructure & Operations Management
  - VMware vRealize Suite
  - VMware vRealize Operations Insight
  - VMware vRealize Operations
  - VMware vRealize Operations for IBM Power Systems
  - VMware vRealize Automation
  - VMware vRealize Code Stream
  - VMware vRealize Business for Cloud
  - VMware vRealize Network Insight
  - VMware vRealize Log Insight
  - VMware Integrated OpenStack
  - VMware Smart Assurance
  - VMware Smart Experience
  - VMware vRealize Hyperic
  - VMware Site Recovery Manager
  - VMware vCenter Converter Standalone
  - VMware Photon Platform
  - VMware Enterprise PKS
  - VMware Essential PKS
  - VMware vRealize Configuration Manager
- Networking & Security
  - VMware NSX Data Center for vSphere
  - VMware NSX-T Data Center
  - VMware SD-WAN
  - VMware NSX Cloud
  - VMware AppDefense Plugin for Platinum Edition
- Infrastructure-as-a-Service
  - VMware vCloud Air
  - VMware Cloud on AWS
  - VMware AppDefense
- Internet of things [IOT]
  - VMware Internet of things
- Application Platform
  - Pivotal App Suite
  - Pivotal Cloud Foundry
  - Pivotal CF Mobile Services
  - Pivotal CF Services
  - Pivotal GemFire
  - Pivotal GemFireXD
  - Pivotal tc Server
  - Pivotal RabbitMQ
  - Pivotal WebServer
  - VMware vFabric SQLFire
- Desktop & End-User Computing
  - VMware Workspace ONE
  - VMware Horizon
  - VMware Horizon (with View)
  - VMware Horizon Air
  - VMware Horizon Apps
  - VMware Horizon Service
  - VMware Horizon DaaS
  - VMware Horizon Clients
  - VMware User Environment Manager
  - VMware App Volumes
  - VMware Identity Manager
  - VMware Workspace
  - VMware Mirage
  - VMware vRealize Operations for Horizon and Published Applications
  - VMware ThinApp
  - VMware Workstation Pro
  - VMware Workstation Player
  - VMware Fusion
  - VMware TrustPoint
  - VMware Unified Access Gateway
- Cloud Services
  - VMware Cloud Services
- Other
  - VMmark
  - VMware View Planner
